SDNY Ruling: Why 'Private' AI Isn't Enough for Privilege

A recent ruling in the Southern District of New York (SDNY) has direct implications for how law firms use AI. In United States v. Bradley Heppner (Case 1:25-cr-00503-JSR), Judge Jed Rakoff ruled that the defendant's use of a consumer AI interface (Anthropic’s Claude) waived attorney-client privilege over the documents generated with it.

The judge’s reasoning focused on three key areas:

- No "Reasonable Expectation of Privacy": Judge Rakoff noted that Anthropic "explicitly advises its users in its Privacy Policy... that it collects data on the 'prompts' entered and 'outputs' generated; that it uses this data to 'train' its AI tool."

- Third-Party Disclosure Waiver: The court found that users have a diminished privacy interest in conversations that are "voluntarily disclosed to [an AI company] and which [the AI company] retains in the normal course of business."

- Lack of Duty: Crucially, the judge stated: "The AI tool that the defendant used has no law degree and is not a member of the bar. It owes no duties of loyalty and confidentiality to its users."

For most law firms using AI, this creates a significant legal risk. For UnitizeAI users, the architecture is fundamentally different.

1. Re-establishing the "Reasonable Expectation of Privacy"

The SDNY ruling focused on the fact that standard AI conversations are stored and subject to review. Under the court's reasoning, if an AI provider reserves the right to use your data for training or to review it for safety, you have given up the confidentiality that privilege requires.

UnitizeAI does not use a chat interface. Instead, we use a transient processing model: each document is sent for unitization as a standalone request, and the AI keeps no memory or history of the interaction. Once the document is processed, the data stream is closed and nothing remains in the AI's environment. Combined with the retention and logging terms covered below, this configuration ensures that no third party, including Google, has the right to use your data for training or review.
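
A minimal Python sketch of the client side of a transient processing model, assuming a hypothetical `call_model(instructions, text)` function that wraps a stateless API call. It shows only that each document travels in its own self-contained request with no conversation history attached; whether anything is retained server-side depends on the provider's retention and logging terms, not on this code.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical signature for a stateless model call: it receives only the
# instructions and the text of one document, and returns the model's output.
ModelCall = Callable[[str, str], str]

# Hypothetical prompt, for illustration only.
UNITIZE_INSTRUCTIONS = "Identify where each logical document begins and ends."


@dataclass(frozen=True)
class UnitizeRequest:
    """Everything sent for one document: no session ID, no prior turns."""
    instructions: str
    document_text: str


def unitize_document(doc_text: str, call_model: ModelCall) -> str:
    # Build a fresh request containing only this document.
    request = UnitizeRequest(UNITIZE_INSTRUCTIONS, doc_text)
    return call_model(request.instructions, request.document_text)


def unitize_batch(docs: list[str], call_model: ModelCall) -> list[str]:
    # No conversation list is carried between iterations, so nothing from an
    # earlier document is replayed into a later request.
    return [unitize_document(doc, call_model) for doc in docs]
```

In a test, `call_model` can be a fake that records its arguments, which makes it easy to confirm that no earlier document appears in a later request.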

2. Eliminating the Third-Party Disclosure Risk

The core of the Heppner ruling was that sharing data with an AI platform acts as a disclosure to a non-privileged third party that doesn't owe you a "duty of loyalty." UnitizeAI solves this through Zero Data Retention (ZDR) and strictly isolated environments.

Per Google’s official ZDR Documentation:

"Google does not use your prompts (including associated system instructions, cached content, and files such as images, videos, or documents) or responses to improve our products."

By using a pipeline that forbids training and mandates immediate deletion, we ensure that the interaction is purely technical and transient, mirroring the "agent of the attorney" status of traditional eDiscovery vendors such as Relativity or Concordance, whose use does not waive privilege.

We maintain a true zero-data footprint by architecting our pipeline to avoid stateful features. We do not use conversation history (Interactions API), long-term file storage (File API), or Search/Maps Grounding, all of which could trigger the retention periods (such as the 30-day window) noted in standard policies.
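
One way to keep this rule from eroding as the codebase changes is a pre-flight check that refuses any request enabling a stateful feature. The sketch below is hypothetical: the request is a plain dictionary, and the field names are illustrative rather than the actual Gemini API request schema.

```python
# Hypothetical request fields for the stateful features the pipeline avoids.
# These names are illustrative and do not match any real SDK schema.
FORBIDDEN_FEATURES = {
    "interaction_history": "conversation history (Interactions API)",
    "file_references": "long-term file storage (File API)",
    "search_grounding": "Search grounding",
    "maps_grounding": "Maps grounding",
}


class StatefulFeatureError(ValueError):
    """Raised when a request enables a feature that may trigger retention."""


def assert_stateless(request: dict) -> None:
    # A feature counts as enabled if its field is present and truthy.
    enabled = sorted(name for name in FORBIDDEN_FEATURES if request.get(name))
    if enabled:
        details = ", ".join(FORBIDDEN_FEATURES[name] for name in enabled)
        raise StatefulFeatureError(f"stateful features are not allowed: {details}")
```

Failing loudly before the request is sent is the design choice here: a misconfigured job stops instead of silently creating retained state.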

3. The Approved Exception: Zero Prompt Logging

There is a catch in almost every AI provider’s privacy policy: Abuse Monitoring. As Google’s documentation states:

"For Paid Services, Google logs prompts and responses for a limited period of time solely for detecting violations of the Prohibited Use Policy."

In a court of law, this "limited period" of logging (typically 30 days) can be treated as a third-party disclosure that risks waiving attorney-client privilege. To close this gap, UnitizeAI applied for, and was granted, an explicit exception to this policy.

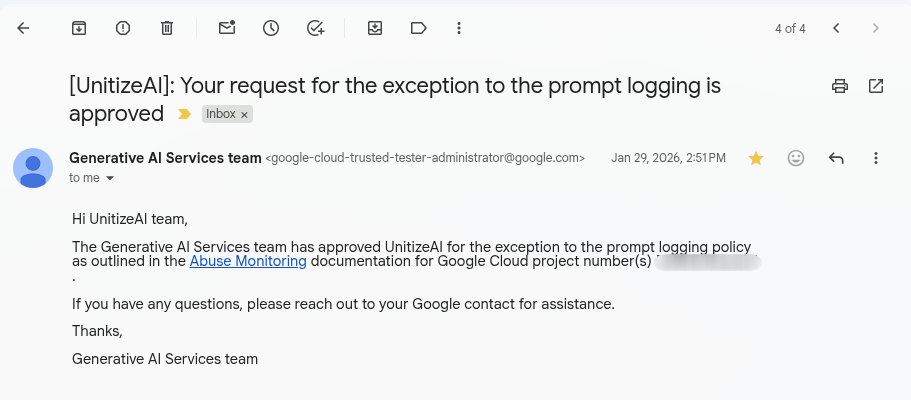

On January 29, 2026, the Google Generative AI Services team officially approved UnitizeAI for a full exception to the prompt logging policy.

This means:

- Zero prompt logging.

- Zero abuse monitoring retention.

- Zero human review.

When a document enters our pipeline, it is processed in an isolated environment, sent through an API with no logging, and then the entire environment is destroyed.
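
The isolate, process, destroy lifecycle can be sketched at the filesystem level with Python's standard library. This is a simplified stand-in: the pipeline described above tears down an entire environment, whereas this example only deletes a private scratch directory, and ordinary deletion is not a secure wipe. The function names are hypothetical.

```python
import shutil
import tempfile
from contextlib import contextmanager
from pathlib import Path
from typing import Callable, Iterator


@contextmanager
def ephemeral_workspace() -> Iterator[Path]:
    """Create a private scratch directory and delete it on exit."""
    # On POSIX, mkdtemp creates the directory with mode 0o700 (owner only).
    path = Path(tempfile.mkdtemp(prefix="unitize-job-"))
    try:
        yield path
    finally:
        # Runs even if processing raises, so no input copy is left behind.
        shutil.rmtree(path, ignore_errors=True)


def process_in_isolation(doc_bytes: bytes, process: Callable[[Path], str]) -> str:
    # Hypothetical helper: write the document into the workspace, run the
    # processing step, and return only its result.
    with ephemeral_workspace() as work:
        src = work / "input.bin"
        src.write_bytes(doc_bytes)
        return process(src)
```

Putting cleanup in a `finally` block means the workspace is removed on both the success path and the error path.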

4. The Ultimate Option: 100% Self-Hosted & Airgapped

For the most sensitive matters—where even a stateless API is a bridge too far—UnitizeAI offers an optional, fully offline pipeline.

In this mode, we do not use an external API at all. Instead, we launch a self-hosted LLM directly on a dedicated, isolated GPU within your job's private VPC.

- No external traffic: Data never leaves the VPC for inference.

- No third-party logs: Not even Google sees the prompts.

- True airgap: The model weights live on the worker VM's local disk, so inference requires no outbound network access.
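
A deployment in this mode might include a configuration check that the inference endpoint is an address inside the private network before any document is sent. The following is a hypothetical sketch; it complements, and does not replace, firewall rules that block egress at the network level.

```python
import ipaddress
from urllib.parse import urlparse


def endpoint_is_internal(url: str) -> bool:
    """True only if the URL's host is a literal loopback or private IP address."""
    host = urlparse(url).hostname
    if host is None:
        return False
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Hostnames are rejected outright: DNS could resolve them to a public address.
        return False
    return addr.is_loopback or addr.is_private
```

Rejecting hostnames is deliberately strict; a real deployment could instead resolve names and check every returned address.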

5. Provable Isolation (The Audit Shield)

A judge doesn't just want to hear that a tool is secure; they want proof. Our Isolation Audit Report provides a cryptographically signed trail of the exact environment your data lived in. It proves that the VPC (Virtual Private Cloud) was locked down and egress was blocked.
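
To illustrate how a signed report makes tampering detectable, the sketch below signs a canonical JSON serialization of the report with HMAC-SHA256 from Python's standard library. The report fields and key handling are hypothetical, and HMAC is a swapped-in technique chosen for brevity: it requires the verifier to hold the same secret key, so a report meant to be checked by opposing counsel or a court would more likely use a public-key signature scheme such as Ed25519.

```python
import hashlib
import hmac
import json


def canonical_bytes(report: dict) -> bytes:
    # Sorted keys and fixed separators produce identical bytes for identical content.
    return json.dumps(report, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_report(report: dict, key: bytes) -> str:
    return hmac.new(key, canonical_bytes(report), hashlib.sha256).hexdigest()


def verify_report(report: dict, signature: str, key: bytes) -> bool:
    expected = sign_report(report, key)
    # compare_digest runs in constant time, so timing does not reveal a partial match.
    return hmac.compare_digest(expected, signature)


# Hypothetical report fields, for illustration only.
report = {
    "job_id": "job-0001",
    "vpc_egress_blocked": True,
    "external_inference_calls": 0,
    "environment_destroyed": True,
}
```

Changing any field, for example flipping `vpc_egress_blocked` to `False`, produces a different HMAC, so `verify_report` returns `False` for the altered report.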

The Verdict

The SDNY ruling is a reminder that standard AI privacy terms are often insufficient for legal work. You need Privilege-Grade AI.

By combining Zero Data Retention, an approved Logging Exception, and an optional Offline Pipeline, UnitizeAI ensures that your work product remains exactly what it was meant to be: Privileged.

Process Documents Without Risking Privilege

UnitizeAI is built for firms that need AI-powered document processing without the privilege exposure. Zero data retention, zero prompt logging, and cryptographically verified isolation — every job, every time.

Try UnitizeAI today and see how privilege-grade document processing works.